Over the past several years, Finnish media theorist Jussi Parikka’s work has received widespread attention in the academic and art worlds alike. Besides contributing to the international foundation for what has been called “German Media Theory” with his work on media archaeology and his editing of Berlin-based media theorist Wolfgang Ernst, among others, Parikka has written on network politics, the dark sides of internet culture, and media ecology.

Together with Digital Contagions and Insect Media, his most recent short book The Anthrobscene and the forthcoming A Geology of Media constellate a body of work that triangulates the world of planetary computation on many levels. From investigations of biological resonances in the design of media technologies—viruses, swarms, insects—to electronic waste, future fossils, and the significance of rare earth minerals, Parikka describes the complex layers that constitute media knowledge production under the technological condition of the anthropocene with academic rigor and artistic elegance. Currently, he works as professor of technological culture and aesthetics at Winchester School of Art.

In the following conversation, Parikka and I address themes of insect media, the materiality of media culture, and other issues that relate to the conjunction of aesthetics, politics, and technology.

—Paul Feigelfeld

Paul Feigelfeld: You have constructed and analyzed multiple media archaeological layers over the last decade: digital contagions and viruses, technological waste, insect and animal analogies in media, and the geological, geopolitical, and climatic relevance of the present and future technological condition. Can you tell me a little bit about the relations and frictions that these layers have with each other?

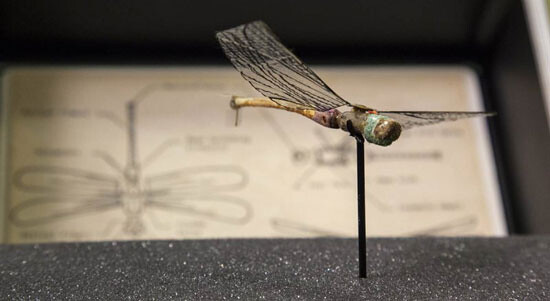

Jussi Parikka: Insect Media is a book about animals, media theory, and how metaphors stem from material culture. The way in which insect-related notions such as swarms, distributed intelligence, hive minds, and computer graphics formations such as boids, the artificial life algorithm, and US military robotics have been foregrounded in digital culture discourse actually questions the material history of this manner of speaking about technology.1 With this in mind, I became more interested in the scientific framing of insects in relation to the idea of alien intelligence. A similar theme was picked up in popular culture in the nineteenth century in the US, as well as in later instances, such as the thought of the pre-WWII avant-garde, or cybernetics in the 1950s, which framed animals as communication systems. Let’s return to this topic soon.

Regarding the cybernetics of the 1950s, my primary case study concerns the dancing bees for which Karl von Frisch became famous: the “waggle dance” is the specific embodied form of communication that von Frisch claimed to have discovered.2 Gradually, towards the end of the twentieth century, there was a growing interest within the arts in nonhuman perception and embodiment. This can be seen in the notion that alien intelligence is irreducible to the intelligence contained in beings with two legs, arms, and eyes. Software and robotics experiments gradually learned that any system that is able to adjust and learn from its environment is more effective than systems which you try to directly design as intelligent. It’s the environment which is smart and teaches the artificial system. Such a realization stemmed from some streams of cybernetics, such as Herbert Simon’s research in the 1960s, which aimed to show that an agent such as an ant is only as intelligent as its environment.

These are some examples of insect media working across the material force of concepts and spanning technological and scientific practices. For over 150 years, many fields—from the sciences to the arts—have understood animals as part of modern media technological culture and have suggested ways in which animals and nature can be understood as conduits of communication.

My interest in viral culture—not merely viruses as objects, but contagion as a systematic feature of digital network environments—continued in another direction while studying this period that was so heavily influenced by cybernetics and information theory. I became interested in how the insectoid—swarms, distributed intelligence, the hive mind—finds its odd home in post-Fordist digital culture. It’s like nineteenth-century Victorian culture all over again. Instead of insect motifs in women’s hat fashion, digital rhetorics of insect intelligence ran through popular narratives.

I admit that such claims about insects and media culture sound metaphoric and all cyberculturey, but this is only before one starts reading and realizing that the arguments work against simplistic determinations and towards a media historical contextualization of how the biological and the technological are codetermining forces. It’s sort of an extended materiality in which technology turns into its other: nature, animals, the organic.

From viruses to insects, early artificial life research piggybacked on the scientific field, which mapped the mathematical and systematic qualities of animal worlds. Unlike some American dreams of meat-meeting-tech, I, like Friedrich Kittler, have always been less interested in the hyperbolic dimensions of such cybermetaphors, and more in the historical links that reveal the project of modernity as an extension of the ways in which power works through technology and knowledge. In other words, I’m referring here to the historical contexts in which knowledge about animals and ecology gets turned into discursive strategies for technological constructs. The metaphoric carries a much wider scientific framework, but it does not explain it. Nor are the biological metaphors reducible to linguistic determinations. This sort of historical work should remind us not to naturalize technological development even if technologies are so embedded in the natural.

PF: And how do we arrive at the point where we step back to look at geologies as media-before-media?

JP: After viruses in Digital Contagions, and after insects, I wanted to extend the excavation of the animal and ecological energies of media culture to the non-organic.3 This is where the new books, The Anthrobscene and A Geology of Media, fit in as a continuation of themes where media materiality extends outside media devices—for example, the minerals of computer technology that enable their existence as functioning technology in the first place.4

I remember a discussion I had with Steven Shaviro years ago, in which he actually suggested this to me before I realized how fitting it would be. He was talking about Whitehead’s ontology in which feeling happens also in the non-organic sphere, but it was one of the sparks that led to thinking about the media history of the earth. It’s the adaptation of the intelligence of non-organic life that determines so much of how accounts inspired by complexity theory have offered a “new materialism” of digital culture. But for me, it’s an ecological, even environmental reasoning that drives this link. The resources that are searched for, identified, and located by technological means in order to drive our technological development consist of rare earth and other kinds of materials that are simultaneously part of the earth’s durational history and part of the new media culture. They embody a media history of the earth, and also what will later become a sort of future fossil layer of technological waste. In other words, before and after media, we already have a significant amount of material things that are part and parcel of technological culture. Even dysfunctional technology merits its own place in the history of media—a history we are also writing in the future tense.

If you want one concrete object to illuminate this idea, think of the monstrous Cohen van Balen object H/AlCuTaAu (2014). Mined from existing technological objects, it’s a sort of reverse alchemy that brands the “magic” of technological culture in high-tech relation to the earth. The gold, copper, aluminum, tantalum, and whetstone that make up the structure are not merely traces of technology. They also represent the persistence of the elemental across various transformations.5 Despite the merits of McLuhan’s proposal, then, media are less about extensions of man and more about transformations of the elements. Already Robert Smithson spoke of focusing on the elemental earth matter instead of technology as extensions of Man.6 In terms of the medium, this connection brings our topic close to land art, to Smithson and the contemporary variations of earthwork in the work of several artists. Among other people, I write a lot about Martin Howse’s work, including his joint projects with Jonathan Kemp and Ryan Jordan. Similarly, thinking about artists from Trevor Paglen to Jamie Allen and David Gauthier, Katie Paterson, and of course Garnet Hertz has made it easier for me to find an angle to address the geology of media because their work already engages with such topics and offers an aesthetic framework for these ontological questions about media.

These questions are a natural extension of the material drive of our aesthetic and media theory. You know this better than I do: it is what the Anglosphere often identifies as “German media theory,” in reference to Kittler and other thinkers who are interested in locating the materiality of cultural techniques in technological arrangements. But I want to insist that the materiality of media starts even before we talk about media: with the minerals, the energy, the affordances or affects that specific metallic arrangements enable for communication, transmission, conduction, projection, and so on. It is a geopolitical as well as a material question, but one where the geos is irreducible to an object of human political intention.

Besides, it’s good to avoid the obvious claims and conclusions. Media theory would become boring if it were merely about the digital or other preset determinations. There are too many “digital thought leaders” already. We need digital thought deserters, to poach an idea from Blixa Bargeld. In an interview, the Einstürzende Neubauten frontman voiced his preference for a different military term than “avant-garde” for his artistic activity: that of the deserter. He identifies not with the leader but rather with the partisan, “somebody in the woods who does something else and storms on the army at the moment they did not expect it.”7 Evacuate yourself from the obvious, by conceptual or historical means. Refuse prefabricated discussions, determinations into analogue or digital. Leave for the woods.

But don’t mistake that for a Luddite gesture. Instead, I remember the interview you did with Erich Hörl, where he called for a “neo-cybernetic underground,” one that “does not let itself be dictated by the meaning of the ecologic and of technology, neither by governments, nor by industries.”8 It’s a political call as much as an environmental-ecological one, a call that refers back to multiple (Guattarian) ecologies: not just the environment but the political, social, economic, psychic, and, indeed, media ecologies.

It’s this sort of cascade of a thousand tiny ecologies that I want to trigger with my work on viruses, insects, and also the non-organic geology and geophysics of media.

PF: In the current age of big data and swarm intelligence, technology looks increasingly to nature and the animal kingdom for inspiration. But you argue that this has been the case since the nineteenth century. Can you expand a little on the history of this—at first—surprising connection?

JP: Let’s think of it like this. When you start to look at how we talk about our technologies and also how they are designed, we are confronted with various expressions about nature—a fascination with nature, animals, and ecology as processes from which we can somehow learn. Despite promises of connection and economy or a culture of “human” sharing, networked media technologies are also described in terms that make us sound like insect colonies: distributed intelligence, swarms, hive mind, and so forth.

But as previously mentioned, this fascination with the insect was already part of a much earlier wave of enthusiasm for new technologies in the nineteenth century. This was the age of telegraphs, different audio-visual technologies, and a generalized expectation of the coming machine age built on the back of the first wave of industrialization. Constant parallels between nature’s perfection and the rationality of the machine already started to appear at the time. On the one hand, there was the idea that animals such as insects, with their multiple compound eyes, six legs, and “wireless” communication across wide distances, are like an alien life-form that mediates the world differently than earthbound creatures. You can find this notion in surprising places, such as entomology books. On the other hand, after Darwin but also continuing along with some earlier religious undertones, one finds the simultaneously occurring idea that nature is a perfection engine: a force that is always looking for an optimal solution to a problem. In architecture, this sort of relationship to the built environment persisted in the bridging of the “natural” and the “artificial.”

Nature as a mathematician—a problem solver—is an idea with earlier roots. It is constantly referenced in descriptions of natural processes in scientific and popular science literature. For example, insect colonies are often portrayed as perfection machines, i.e., models that have a lot to teach us about optimization algorithms.

PF: But hasn’t this turned out to be a misconception? Starting with early forays into the science of ecology in the nineteenth and twentieth centuries (think about people like Arthur Tansley), this idea of nature as self-regulating, harmonious, and always able to find an equilibrium deeply ingrained itself into the systems theories of cybernetics up through The Limits to Growth (1972). More recent studies have started to show that chaos, contingency, and change are much more significant and of course harder to simulate, predict, and deal with, on all levels. Doesn’t this change the post-cybernetic approach to earth as media entirely?

JP: It seems to depend on scale. Looking at how insect colonies optimize their movements feeds into probabilistic problem solving; it does not carry the weight of the illusions of a harmonious planet in homeostasis. It’s on the level of technique that such “naturalizations” are still seen as useful ways of processing data.

But applying the idea of a self-regulating system to the level of the planet is of course another thing altogether, and much more difficult. As you point out, it has become clear that we are dealing with such massive levels of interconnected patterns that it sets quite a difficult task for simulation techniques. It’s easier to simulate things when we know the agents and parameters involved. The more complex systems become, the more difficult it is to perceive and project the interactions, transactions, and intra-actions within. Computational power is one thing—useful both for financial institutions as well as artificial life research—but so is the careful work of selecting what we focus on in any simulation of a natural or economic process. Which variables are seen as important? What sort of agents are chosen as interacting, and in what ways? Based on what sort of data, collected where, and under what conditions do we mobilize projective calculations? What are the logistics and framing of the data according to which we want to perceive the planet as simulated?

Furthermore, some of the more naïve hypotheses of the self-regulating planet over the past century have always implicitly imagined the planet as something made for us: the underlying belief being that whatever we do to it, the planet will restore a suitable balance for us humans. If the planet is a self-regulating system, it does not necessarily mean that the time-scale is at all adjusted for the human species—Lynn Margulis already reminded us that “Gaia is a tough bitch” who works happily without any humans around.9

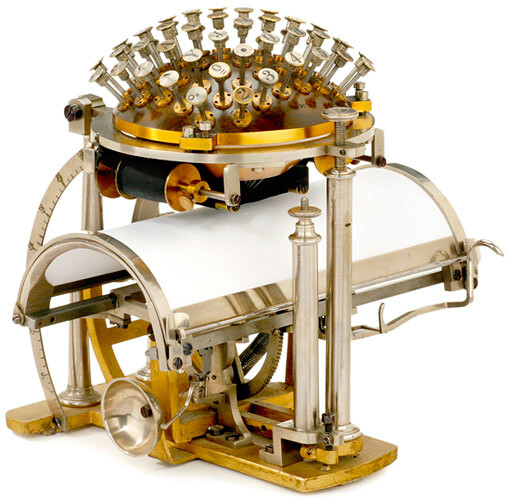

And the fantasy of homeostasis has not really disappeared from popular scientific discourse. It might have just shifted in order to be effective in other contexts. The use of feedback loops in health and wellness applications is such a big thing within the “quantified self” movement—a careful priming of the self that is however constitutive of a mix of environmental relations captured through an ever-increasing number of devices that enable us to perceive previously undiscovered patterns. Already in 1952, Ross Ashby introduced his Homeostat Machine in the Macy conferences on cybernetics, and today we are still in the midst of producing—and sometimes even fetishizing—cultural techniques of optimization.10

PF: Speaking of optimization, another recurring reference point is of course the brain—about which we still know very little. My favorite optimization procedure in AI and neural networks is called “Optimal Brain Damage,” which works by strategically pruning connections in a network in order to reduce redundancies.

JP: The ideal of a perfectly optimized brain (read: a connected emergent transmission network of any kind) is constantly fantasized through its abilities to self-repair. The ideal brain can reroute around damaged areas. It learns. Flexibility and adaptation are the key words here, as shown in artificial life and AI research over the past few decades. From the original idea of intelligent machines, or representational AI, we have now moved to a focus on learning machines that are able to adapt to their environment and bootstrap the environment’s cues as part of their intelligence.

On a slightly different but not unrelated level, Catherine Malabou has been able to clearly identify the relationship between the brain and contemporary capitalism.11 Pasi Väliaho also picks up this connection in his recent book Biopolitical Screens, which highlights the military-scientific determinations of the neoliberal brain, which is presented as flexible even when prone to constant failure. Hence the importance of pedagogical drills—for example, the military recuperation programs that retrain traumatized soldiers.12

I became interested in this constant back-and-forth movement between the natural and the technological as a way of framing an alternative approach to technology. I started to look into how this theme of animality persists in a more ecological relation to technology. Ecology here does not necessarily mean “nature,” but more accurately, the wider set of relations in which technology is understood as a historical and material conditioning of everyday life.

By examining insects, animals, and so on in this media archaeology of the animal and the technological, I was able to locate some very odd and inspiring insights into media, art, and technology. That brain you mention—we need to constantly remind ourselves that it’s not a model of the necessarily human brain. The brain becomes a more general cybernetic model too. It’s not merely the human that is modeled here. Design solutions are also picked up. Besides research into bees and their embodied forms of communication, think of, for example, British cybernetics and W. Grey Walter’s cybernetic turtles, or scientific research with monkeys and dolphins in the US,13 which was of interest to the US Navy.

It is not merely about insects of course; think, for example, of early robotics designed to be embodied and self-reflective of their surroundings. In a way this meant bootstrapping a sort of “tiny intelligence” as part of the robots’ world-relations.

PF: How does the study of swarms of birds or fish, ant colonies, the analysis of ocean currents, or the creation of artificial life-forms help us create better models for collective agency and organization?

JP: In British cybernetics, William Grey Walter’s work on a robotic tortoise in the 1950s was a good example of how to think, design, and plan in a non-anthropocentric way. In more ways than one, a lot of the early work of contemporary society on smart agents that are responsive to their environments was set in post-WWII cybernetics; British and American scholars in cybernetics and information theory are the forefathers of the contemporary posthuman swarm-world.

Swarms are, of course, a key concept in terms of the insect media approach. The focus on swarms is a curious move, from nature documentaries about schools of fish to computer animation techniques that partially automate agent movements. One key feature of the recent enthusiasm for using “swarming” to describe emergent forms of organization is that it’s no longer necessary to design a central intelligence; instead, one can build reflective, interactive, and developing systems that bootstrap “intelligence” into their behavior.

In other words, the beauty of a bird flock that seems to move with a mind of its own is the perfect visual conceptualization for an era that thinks in terms of emergent systems. But let’s not be mistaken. This was already the case in the early twentieth century, when certain pioneers in entomology described the powerful, almost magical nature of this kind of organization. Some popular fiction writers at that time were amazed at how insect colonies acted like an organism composed of multiple distributed agents. William Morton Wheeler, for example, took a scientific approach to self-organizing systems and the “meta-intelligence” exhibited by the colony, which was often perceived like a machine. But that sort of a machine did not resemble the clunky steam and mechanical tools that characterized industrialization. Instead, Wheeler was already thinking of models that have become more prevalent in our so-called postindustrial age of intelligent machines—intelligent because they can adapt and learn. They are collective machines that synchronize according to the group and also the environment.

This also stands at the emergence of important traits of computer graphics and visual culture. “I would like to thank flocks, herds, and schools for existing: nature is the ultimate source of inspiration for computer graphics and animation,”14 pronounced Craig Reynolds, a pioneer in artificial life and computer graphics. He said this in the mid-1980s to mark his “invention” of boids, these little figures of procedural graphics that moved from experiments in collective behavior to Hollywood films and the wider visual aesthetics of digital culture. In network science, the likes of Eric Bonabeau spoke of the design information gathered from social insects, pointing out that things like errors are not merely a thing to get rid of, but an instrumental part of the self-organization of a system that is finding and mapping the best ways to explore an environment.15

This is the insect lesson: the difficulty of building intelligent systems is replaced by the idea that you can instead focus on building enough small subsystems so that, by interacting with each other, they are able to create intelligent systemic behavior on their own. Swarms then spread from technological discourse to describe many other things, and now they are indeed at the core of how we think about social behavior and even the economy; crowdsourcing is one such logic that relies on the existence of a network; the hive mind is a related conceptualization. Many other similar themes offer variations on how entomological themes penetrate our postindustrial capitalist society. We don’t need to think of this as biomimesis, as imitating nature; it’s more of an embodied relation of gathering information about the relations that constitute specific informational and embodied patterns, and using those as design principles.16

PF: In both technology and society, there is a constant back and forth between centralization and hierarchization on one hand, and distribution, decentralization, and nonhierarchization on the other. So how can metaphors of the animal world—especially when we think about networks—be used to think about connectivity in new ways?

JP: All of this gets really interesting, and really problematic, when we start talking about the “society of connectivity” through concepts related to nature. This is an old critique but still valid: using terms that are natural and naturalizing to describe complex social and economic relations in capitalist society is a perfectly tuned ideological operation. Critics of capitalism, such as Benjamin, made this critique in their own creative ways, by recognizing the back-and-forth movements of history and nature. This was part of the Frankfurt School agenda.

The same thing happens through historical retrojections: look, for example, at the number of stories that are written about the “first” selfie or ancient “social media” when some new archaeological discovery is made. It’s perfect material for a pseudomedia archaeological search for the roots of phenomena that are media-specific and part of the postindustrial mode of capitalist operation. In terms of nature and animals, the connection between artificial life and capitalism is deeply embedded in much more than linguistic naturalization and metaphors. One can even say that this sort of discourse is the new version of Adam Smith’s invisible hand. In this case, that means an interest in the semi-autonomous operations of software agents—for example, in financial trading. Since the 1980s, banks and other capitalist institutions have shown a growing interest in artificial life research, something I touched on in Digital Contagions.

But there is more than ideology at work here. The “swarm” is not merely a quirky metaphor adopted from biological discourse. Increasingly, swarms form our infrastructure, and are intelligent agents that act as proxies for our social actions, desires, and moods. The swarm is behind everything, from the banal to the cruel, from the networked smart house to the military-technology complex. The swarm is an infrastructural constitution of relations of sensing, data processing, and feedback structures, and it increasingly constitutes what we as so-called humans are able to perceive.

PF: How does all of this apply to the notion of the “cloud”? I am concerned that this metaphor of the ephemeral and celestial, puffy and angelic, conceals—in a rather smoke-and-mirrors way—the massive campaign of data centralization that it actually encompasses.

JP: I am tempted to say that it is as simple as this: the shop window is the cloud, and behind it is the brand—the massive, planetary-level political economy of infrastructural arrangements. It’s in this sense that the Snowden leaks are as much about the wrongdoings of the NSA and the GCHQ as they are about the software and hardware that allow data to flow and be intercepted. It’s not merely about the specific techniques developed for interception, but about the whole arrangement in which data is stored, processed, and transmitted in ways that follow geopolitical preferences.

One can also realize this through such discussions as the “smart city,” which is a similar operation and should be discussed in terms of the materiality of its infrastructure and the political economy that puts it into motion. Of course, this infrastructure might be partly cloud-determined; control structures for traffic, security, and shopping are processes not on the level of the street, but on the level of the cloud. In practice, this can range from driverless cars to preemptive automated security decisions made based on projective risk calculations. But as suggested above, instead of thinking of this setup in terms of ideology, consider it in terms of a desire that is infrastructural and that channels our actions, perceptions, and potentials. This is the model that Deleuze and Guattari propose, and it works well in this context too; sites of storage, archives, and processing power that are connected to the sensors, interfaces, and so forth are where reality is being modulated. We should not get too stuck in a representational analysis of the terms, which of course might be interesting too. Instead, we should be able to track how desire is invested in infrastructure and material assemblages, and how we can conceptualize it accordingly.

PF: (When) will the engineer disappear and technology become evolutionary?

JP: The most interesting media theory work of the past few decades—such as Kittler’s—has tried to think through this question in terms of self-writing. When machines are able to write, reproduce, and design themselves, they pick up on characteristics that are more than what is being engineered into them. In 1961, the British science fiction author Arthur C. Clarke suggested that “any sufficiently advanced technology is indistinguishable from magic.”17 Sure, but perhaps we could now rephrase that to say that “any sufficiently advanced technology is indistinguishable from nature,” not merely because it is “inspired” by natural processes, but also because it disappears into its surroundings.18

It’s not merely about the complexity. What I’m interested in is a different sort of a relation, one of material production. One can write an archaeology and a cartography of media technologies from the point of view of their materials—the gutta-percha used for insulation, the chemistry of visual media, the mineral basis of computationality. Lewis Mumford was among the first to hint at how to do that.19 He spoke of the paleotechnical era, which was dependent entirely on the mining of coal, and the following modern technical eras that discovered modern and synthetic materials as well as new energy economies. These are the genealogical traits of a material history of media that begins with the material and its modes of organization rather than with the engineer.

Following Manuel De Landa’s thought experiment, the future robot (media) historian won’t be interested in the engineer, for example, but rather in the processes of organization, self-organization, and emergence of material components.20 And, I would add, this robot historian will be interested in the affordances and logistical chains that ensure the availability of the material components that sustain what we think of as media and technology. The robot will most certainly have a more efficient system of dealing with electronic waste, too.

PF: So the engineer or designer becomes the material of media …

JP: Let’s turn it upside down, indeed. The engineer does not breathe life into inert material. With their specific qualities and intensities, the materials demand a specific type of specialist or a specific method to be born, so that they might be catalyzed into the machines we call media. The material invents the engineer.

Media emerges with a relation to the earth and the planet, both through synchronization with natural processes perceived to be efficient—such as swarms—and through a systematic knowledge of what materials should be extracted to build such artificial machines; minerals, fossil fuels, and rare archaic elements dating back millions of years sustain the fact that we have high-tech media.

PF: There is something uncanny in the “otherness” of insects. We all know the saying that cockroaches and ants will long outlive us after whatever kind of apocalypse might come. How can we approach this posthuman discourse and the idea of non-anthropomorphic intelligence?

JP: This is the other pole of media materiality—not the earth from which media is composed, but what will remain after the technological. It is also the other end of screen materiality, as Sean Cubitt has long encouraged us to focus on: the hardware of the screens as a regime of aesthetics that falls under the theme of ecomedia. This is not an object of “ecoaesthetics” as a separate art work so much as it is the conditioning of the connection between the technological and its environmental baggage.

I write about fossils and their imagined futures in the forthcoming book, A Geology of Media, by addressing the idea of future landscapes of waste that will be the synthetic remainders of our scientific-technological culture. I move the focus from synthetic intelligence to synthetic rubbish. But in terms of the posthuman, the question is complex. In a recent interview, Rosi Braidotti nailed it when referring to N. Katherine Hayles.21 Perhaps, she argues, we should be less concerned with the question of non-anthropomorphism than with non-anthropocentrism. Echoing Braidotti, some recent philosophy seems to have finally discovered non-anthropocentrism as a necessary perspective. But the insistence on abandoning anthropomorphism is rather difficult. We cannot just adopt a position of “nowhere,” of imagined object worlds, and a phenomenology or even an ontology of that sort of enterprise without having something to say about epistemology.

This marks a departure from some proponents of speculative realism. I am not that interested in getting involved in the current philosophical discourse that seems a bit removed from my concerns in materiality, art, technology, and historical conditions of issues that are quite pressing, not least the climate disaster. I am interested in the longer roots of the kind of non-anthropocentric thinking that still attaches to a wide range of determinations relevant to media history and media archaeology. For me, the philosophical question of nonhuman intelligence is one that we can address through media history: the various phases in which cultural techniques shift from humans to machines, and in which complex feedback loops and informational patterns redefine notions of intelligence. Alien intelligence also comes in many forms and has arrived many times already in the form of everything from bacteria to technological constructions.

PF: What, in your opinion, will be the (near) future of drones, (nano)bots, and cyborgs? Will all that remains be just posthuman wastelands of nanotechnological life-forms that fuse with the resilient insect populations of the future earth?

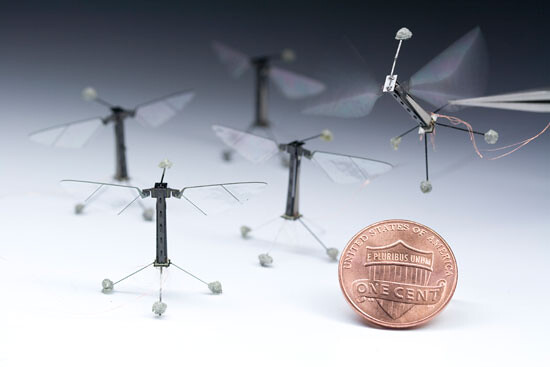

JP: As in the skies, so in the networks. That’s as biblical as I can get. But more seriously, the multiplication of distributed agents connected to the military and corporations defines the way in which security and entertainment media worlds create a swarming near-future scenario, often envisioned either through the military possibilities of massively distributed robotics—from Grey Walter’s robotic tortoise to the robotic “bee swarms”—or as the future of the service economy.

Swarms are really good at synchronizing, or as German media theorist Sebastian Vehlken has convincingly demonstrated, they are indeed synchronization machines.22 They create collective behavior from simple elements, but they also have the ability to synchronize with their environment. This is where the flocks of anchovies in the waves and birds in the air become useful for understanding the smart environment. So much of what we put into our artificial distributed intelligence machines is predicated on knowledge about nature gathered for the past one hundred years or so. The natural is folded in as part of the social and the technological, including military security applications.

Parts of Snowden’s recent statements or “leaks” include mentions of the MonsterMind software swarm, which is designed to detect cyber attacks against the US as well as engage in preventive counterattacks. It’s a struggle on the infrastructural and logistical level that characterizes these sorts of situations where the target does not merely come in human form. This is one form of the swarm-service future, with the distributed “proxies” of surveillance, sensors, and military operations offered as software or robotics.

PF: Which means that technology’s level of autonomy and autonomous nonhuman agency is rising. What if we thought about this not in terms of warfare, but in terms of ecological evolution? When swarms of networked nanobots migrate and flock to the Global South to mine rare earth minerals for themselves …

JP: It’s still a continuation of the security industries that are part and parcel of the protection of the resourcing, logistics, and accumulation of materials. The various military/defense equipment manufacturers are constantly looking for new markets, which also means that domestic security in many countries will see an increase in drones as the proxies of intelligent law enforcement. Drones are at the forefront of technological and legal battles over new forms of enforcing borders that are not merely national limits, but rather a variety of protected zones based on different security concerns, economic interests, and so on. They also create new cultural practices and subcultures such as those around DIY drone design.

Why would you have to invent apocalyptic future scenarios when all you have to do is write a descriptive account of the current moment? It was Sean Cubitt again who nailed it: the hostile cyborg-entity that’s out to get us is not sent from the future in the form of a killer robot, but rather exists now as the distributed “intelligence” of corporations that feed on the natural resources of the planet and the living energies of humans. What are the institutional ties of drones—also in terms of their data relations? Where does the feed go, whose drones are they, and how is data gathered with sensors institutionalized and set into action?

The legacy of 1990s cyberculture should not be about idealizations of a new territory that is completely removed from nation-states. Remember the declaration of independence of cyberspace? Well, the supposed secession is more accurately a new layer of governance that cuts across the borders and layers of corporations and supranational bodies. It constitutes a reproduction and variation of forms of power, privilege, and security that works through producing knowledge, but also by means of brute force. Benjamin Bratton is the leading analyst of the new nomos that divides the earth and the seas, the clouds and the underground. The new technologies of self-organization, such as swarms, drones, smart infrastructures, and so on, are employed in relation to the wider geopolitical agencies of the military-cryptological industries, and the border security of nation-states and privileged private spheres.

The novels on singularity that I find to be crucial markers of the emergence of the computational, digital culture of the 1980s and 1990s—from Vernor Vinge to Ray Kurzweil, from Erkki Kurenniemi to the critical accounts of Charles Stross—are embedded in the corporate work of Google. Kurzweil’s day job in natural language processing is still geared toward his vision of 2029, when computers “close the gap” and reach humanoid capacities of “being funny, getting the joke, being sexy, being loving, understanding human emotion.”23 It’s a perfect narrative for Wired, but it misses the point: it’s not a given that humans get the joke, or are sexy, or loved. But this bootstrapping of the affective into the systematic search-engine-turned-computational-infrastructure of what used to be “cognitive capitalism” fuels this whole massive operation.

Florian Cramer is right to suggest that these supposedly technohumanist (corporate) fantasies are actually dystopian, including “Kurzweil’s and Google’s Singularity University, the Quantified Self movement, and sensor-controlled ‘Smart Cities.’”24 Hence the postdigital should not become a mourning ground for an apocalypse to come, but rather a more politically oriented historical analytics, programmatics, and ethics, an idea inspired by Braidotti. The nanotechnical and such are not to be projected as part of a future, but rather as an articulation of the technical media reality now, including everything from corporate cyborgs to swarm-agency. Any conceptualizations of the “post” are not in this sense futuristic, but in the best case can produce a sense of the present as a temporal multiplicity worthy of our times. Again, I am echoing Braidotti in a feminist ventriloquist style.

See Craig Reynolds, “Flocks, Herds, and Schools: A Distributed Behavioral Model,” Computer Graphics vol. 21, no. 4 (July 1987).

Karl von Frisch, The Dancing Bees: An Account of the Life and Senses of the Honey Bee, trans. Dora Ilse (London: Country Book Club, 1955).

Jussi Parikka, Digital Contagions: A Media Archaeology of Computer Viruses (New York: Peter Lang, 2007).

Jussi Parikka, The Anthrobscene (Minneapolis: University of Minnesota Press, 2014); Jussi Parikka, A Geology of Media (Minneapolis: University of Minnesota Press, 2015).

See the project page online →.

Robert Smithson, “A Sedimentation of the Mind: Earth Projects” (1968), in Robert Smithson: The Collected Writings, ed. Jack Flam (Berkeley: University of California Press, 1996), 101.

Susanne Geuze, “I am not avant-garde; I am a deserter: an interview with Blixa Bargeld,” Volonté Générale 4 (2014) →.

Erich Hörl and Paul Feigelfeld in conversation →. Originally published in Modern Weekly.

Lynn Margulis in John Brockman, The Third Culture: Beyond the Scientific Revolution (New York: Simon & Schuster, 1995). The conversation can be found online at →.

See John Johnston, The Allure of Machinic Life: Cybernetics, Artificial Life, and the New AI (Cambridge, MA: MIT Press, 2008), 40–47.

Catherine Malabou, What Should We Do With Our Brain?, trans. Sebastian Rand (New York: Fordham University Press, 2009).

Pasi Väliaho, Biopolitical Screens: Image, Power, and the Neoliberal Brain (Cambridge, MA: MIT Press, 2014).

See John Shiga, “Of Other Networks: Closed World and Green-World Networks in the Work of John C. Lilly,” Amodern 2 (2013) →.

Craig Reynolds, “Flocks, Herds, and Schools: A Distributed Behavioral Model,” Computer Graphics vol. 21, no. 4 (July 1987): 25–34.

Derrick Story, “Swarm Intelligence: An Interview with Eric Bonabeau,” openp2p.com, Feb. 21, 2003 →.

A point made also by John Johnston, The Allure of Machinic Life, 52.

The phrase is the third of the often-quoted “Clarke’s Three Laws.” This one is introduced in the later version of Clarke’s essay “Hazards of Prophecy: The Failure of Imagination” (1973).

The science fiction author Karl Schroeder has also used the same phrasing in the context of the problematics of (detecting) alien intelligence. See Karl Schroeder, “The Deepening Paradox,” November 2011 →.

Lewis Mumford, Technics and Civilization (Chicago: University of Chicago Press, (1934) 2010).

Manuel De Landa, War in the Age of Intelligent Machines (New York: Swerve, 1991).

Rosi Braidotti and Timotheus Vermeulen, “Borrowed Energy,” Frieze 165 (September 2014) →.

Sebastian Vehlken, Zootechnologien. Eine Mediengeschichte der Schwarmforschung (Zürich: Diaphanes, 2012).

Steven Levy, “How Ray Kurzweil Will Help Google Make the Ultimate AI Brain,” Wired, April 25, 2013 →. The Winchester School of Art is currently part of a consortium with University of California San Diego and Parsons School of Design where issues of artificial intelligence, synthetic intelligence, and remote sensing are addressed as part of our collective research work.

Florian Cramer, “What is Post-Digital?” APRJA 3.1 (2014) →.