The Battle of Ilovaisk

Forensic Architecture, 2018

8 Minutes

Artist Cinemas

Week #3

Date

July 1–7, 2020

Join us on e-flux Video & Film for an online screening of Forensic Architecture’s The Battle of Ilovaisk: Verifying Russian Military Presence in Ukraine (2018), on view from Wednesday, July 1 through Tuesday, July 7, 2020.

Currently, there’s no shortage of cinematic engagements with machine learning. Quite a few of them focus on the subjugation of humans by intelligent machines, while others try to imagine ways for machines to create films with minimum (or no) participation of humans. Forensic Architecture’s The Battle of Ilovaisk goes against the grain of both of these tendencies.

For this project, a machine learning tool was developed to make sense of a vast mess of moving images and other pieces of information created by humans in the course of one of the most violent episodes in the recent history of Europe: the covert invasion of Eastern Ukraine by the Russian regular army in August 2014. The results returned by the machine were scrutinized by human researchers who examined evidence of war crimes committed by the Russian military on Ukraine’s territory, made apparent by cross-referencing the available data. The biases inherent in the mechanisms of machine learning were also examined.

This eight-minute video by Forensic Architecture, selected for the War and Cinema program, is a documentary piece that ventures into the realms of both science and politics (it’s part of the body of evidence submitted to the European Court of Human Rights in the form of an interactive platform). While the video is presented here as a self-sufficient piece of filmmaking, the viewer is also invited to explore in depth Forensic Architecture’s Ilovaisk platform, on which the information collected and examined by machine and human researchers is placed on a time map and made available for interactive viewing.

Delving into FA’s Ilovaisk platform opens up a universe of primary sources that can be viewed as pieces of spontaneous filmmaking themselves. Anonymous videos of Russian military vehicles recorded by locals in Eastern Ukraine, filmed interrogations of captured Russian soldiers, and bits of footage created by war reporters are, primarily, of evidentiary value. However, for the attentive viewer they also reveal a great deal of psychological and social detail that proves them to be indispensable micro-historical documents of a proxy war that Russia is waging in Ukraine, and, by extension, elsewhere.

The Battle of Ilovaisk is presented here alongside an interview with Forensic Architecture’s Nicholas Zembashi by Oleksiy Radynski. The film and interview are the third installment of War and Cinema, a program of films, video works, and interviews convened by Radynski, and comprising the second cycle of Artist Cinemas, a long-term, online series of film programs curated by artists for e-flux Video & Film.

War and Cinema will run for six weeks from June 17 through July 29, 2020, with each film running for one week and featuring an interview with the filmmaker(s) by Radynski and other invited guests.

Nicholas Zembashi in conversation with Oleksiy Radynski

Oleksiy Radynski (OR):

In The Battle of Ilovaisk, machine learning was used to sift through around 2,500 hours of online videos that might contain evidence of war crimes committed by the Russian Federation in the territory of Ukraine. How were these 2,500 hours sourced in the first place?

Nicholas Zembashi (NZ):

The Ilovaisk platform was the first project that we’ve developed where research is coupled with machine learning as a tool for human rights investigations and open-source research. The tool that we’ve developed uses existing APIs from YouTube to source videos, and it customizes them in order to filter the videos down to certain research terms, say, location names, or specific dates of the battles in the summer of 2014 in Ukraine. Then this information is returned as video clips that are related or relevant to the search terms, more specific than you can get by searching on YouTube. This is what we call the media triage—it’s the tool that we developed. The tool is now open-source. It triages the sets of videos on those research terms, and then it triggers a download process to grab all of those videos and break them down into frames. Essentially it prepares those videos for a machine learning classifier, which is the tool that uses machine vision to determine what parts of an image contain the object that we’re interested in. This tool also allows us to choose how frequently it takes a frame out of the video—is it every frame, or ten frames out of 2,000? Sometimes it’s quite a laborious process even for the machine to work through, let alone the researcher.
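Editorial note: the frame-sampling choice described above ("every frame, or ten frames out of 2,000") can be illustrated with a minimal sketch. This is not Forensic Architecture's code; the function name `sample_frame_indices` and its parameters are hypothetical, and it assumes frames are picked at evenly spaced positions.

```python
def sample_frame_indices(total_frames: int, wanted: int) -> list[int]:
    """Return up to `wanted` evenly spaced frame indices from a video.

    Hypothetical helper: `total_frames` is the number of frames in the
    video, `wanted` is how many frames should be passed to the classifier.
    """
    if total_frames <= 0 or wanted <= 0:
        return []
    # Never ask for more frames than the video contains.
    wanted = min(wanted, total_frames)
    # Integer arithmetic keeps the spacing even without rounding drift.
    return [i * total_frames // wanted for i in range(wanted)]


# Ten frames out of 2,000: one frame every 200.
sparse = sample_frame_indices(2000, 10)
# Every frame of a short five-frame clip.
dense = sample_frame_indices(5, 5)
```

Sampling fewer frames trades recall for speed: a vehicle visible for only a second may fall between two sampled frames, which is one reason the sampling rate is left to the researcher.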

Then we use an adapted pre-trained classifier, trained to recognize certain generic labels using a database known across the machine learning community as ImageNet. It now already has 10,000 groups of classified terms, which human researchers went through and classified (what is a tank, what is an assault rifle, what is a balloon, what is a house, etc.), and all of that was used to train that classifier. We took the terms from these pre-trained classifiers that were useful to our case, which were more focused on military vehicles and military weapons, drawing on something that was already out there in the open-source community. And at that degree, we allow the machine to go through all of these frames of video, and return results in the way that you see in our video as highlights of degrees of certainty, where in those videos it recognizes those specific terms: tank, soldier, uniform. So then researchers can go through all of those videos and verify them.
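Editorial note: the step of keeping only the labels relevant to the case, and returning them with a degree of certainty for a researcher to verify, might look roughly like the sketch below. It is an illustration under stated assumptions, not the actual tool: the `Prediction` record, the `frames_for_review` function, the 0.5 threshold, and the sample scores are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    frame: int    # index of the video frame
    label: str    # generic label returned by a pre-trained classifier
    score: float  # classifier confidence between 0 and 1


# Labels mentioned in the interview; the real list is not given in the text.
LABELS_OF_INTEREST = frozenset({"tank", "soldier", "uniform"})


def frames_for_review(predictions, labels=LABELS_OF_INTEREST, min_score=0.5):
    """Keep relevant, sufficiently confident predictions for human checking.

    Returns (frame, label, score) tuples, highest confidence first, with
    only the best score kept per (frame, label) pair.
    """
    best = {}
    for p in predictions:
        if p.label not in labels or p.score < min_score:
            continue
        key = (p.frame, p.label)
        if key not in best or p.score > best[key]:
            best[key] = p.score
    rows = [(frame, label, score) for (frame, label), score in best.items()]
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Invented sample output of a classifier run over sampled frames.
sample = [
    Prediction(0, "tank", 0.9),
    Prediction(0, "balloon", 0.95),   # confident, but not a label of interest
    Prediction(200, "soldier", 0.4),  # relevant, but below the threshold
    Prediction(400, "uniform", 0.7),
    Prediction(0, "tank", 0.6),       # duplicate with a lower score
]
queue = frames_for_review(sample)
```

The output is a review queue, not a verdict: every row still has to be checked by a human researcher.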

We never use the machine as something that simply returns information which we can treat as somehow correct by default. There is always a step of fact-checking all the way. So the researcher is always working alongside the machine. The machine is also a researcher in itself, but we acknowledge it has biases, it has issues, it might return wrong results or results that don’t make sense. So the researcher goes through all the video frames that the machine returns, and they check if there’s anything of interest. All of those videos were referenced, sourced, verified, and then visualized into a virtual interactive platform made by a tool we call “time map” that allows all the open-source data to be visualized as points of information. We’ve grouped hundreds of hours of videos into around 150 events, and all of those events contain either videos, or reports, or translated documents and testimonies, so the evidence can be viewed for the first time on this mass scale, where geography, time, reports, testimonies, images, videos, and every piece of evidence can be cross-verified. That’s part of the process of gathering new evidence that the legal teams that had commissioned us might not have been aware of.
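Editorial note: the idea of grouping verified material into events that can be cross-checked by place and time can be sketched as a simple data structure. This is only an illustration and says nothing about how the time map tool is actually built: the `Evidence` record, both functions, and the placeholder places, dates, and source names are invented for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Evidence:
    kind: str    # e.g. "video", "report", "testimony"
    place: str   # placeholder location name
    day: date    # placeholder date
    source: str  # placeholder identifier, not a real reference


def group_into_events(items):
    """Group evidence items that share a place and a day into one event."""
    events = defaultdict(list)
    for item in items:
        events[(item.place, item.day)].append(item)
    return dict(events)


def corroborated(events, min_kinds=2):
    """Return keys of events backed by at least `min_kinds` kinds of evidence."""
    return [
        key
        for key, items in events.items()
        if len({item.kind for item in items}) >= min_kinds
    ]


# Invented placeholder data, not taken from the platform.
items = [
    Evidence("video", "place-a", date(2014, 8, 1), "clip-001"),
    Evidence("testimony", "place-a", date(2014, 8, 1), "statement-007"),
    Evidence("video", "place-b", date(2014, 8, 2), "clip-002"),
]
events = group_into_events(items)
strong = corroborated(events)
```

In this toy version, an event counts as corroborated when independent kinds of material (say, a video and a testimony) point to the same place and day.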

OR:

What’s the watershed moment for open-source investigation in the case of the Ilovaisk platform?

NZ:

We now have a media landscape that’s never existed before, and we’re inundated with information. There’s so much information that it’s easy to either get lost in it, or use it to manufacture doubt. This plays into the hands of people that want to challenge legitimacy. The watershed moment in this case—and in cases that follow—is that it opens a path in using tools like machine learning that try to automate part of a researcher’s job in sifting through the information that exists online and pinpointing things much faster. It would be impossible to have a realistic timeframe for going through thousands of hours of videos, even if you had a factory of people just mining the web for information. Now, machine learning can be appropriated as a tool, in the spirit of what we do as Forensic Architecture. That is, we use the tools that are already being used by state or corporate agents, and we question those tools. We pick them apart and try to use them in a counter-forensic manner, in a way that challenges their use by state or corporate agents. That was the reason we kickstarted this research with Ilovaisk. It was a project about an overabundance of information, and that was the challenge.

OR:

The Ilovaisk platform is a really elaborate, in-depth investigation into something that’s been well-known for a very long time. The fact that Russia covertly invaded Ukraine with its regular forces in August of 2014 was established almost immediately and quite well-documented. Still, none of that made it much harder for Russia to deny the invasion.

NZ:

Yes, it’s already common knowledge—there is a common statement, it is apparent, and it’s being fought for legally as well. And that’s something that the legal teams that commissioned us are trying to prove. What we’ve produced is a very analytical piece that can back up that claim, without making that claim ourselves—for we as Forensic Architecture don’t make claims in the legal setting. What we discovered and what we can say is that there is overwhelming evidence, like we all know and like it has been known, of Russian presence in different forms. It was easy before to discount these claims on the basis that either there’s too much information, or it’s too sparse, or broken up. Through the platform, and through the process of cross-verification, we’re able to show that there are way more links, and way more narrative threads between the information than is immediately apparent. And it is all there: the research is sourced and linked, it’s all online. For anyone who wants to claim otherwise—they can try and challenge it. We’re not fabricating, we’re only cross-referencing what’s already known.

OR:

How did you select the four particular cases to be represented through short films on the Ilovaisk platform?

NZ:

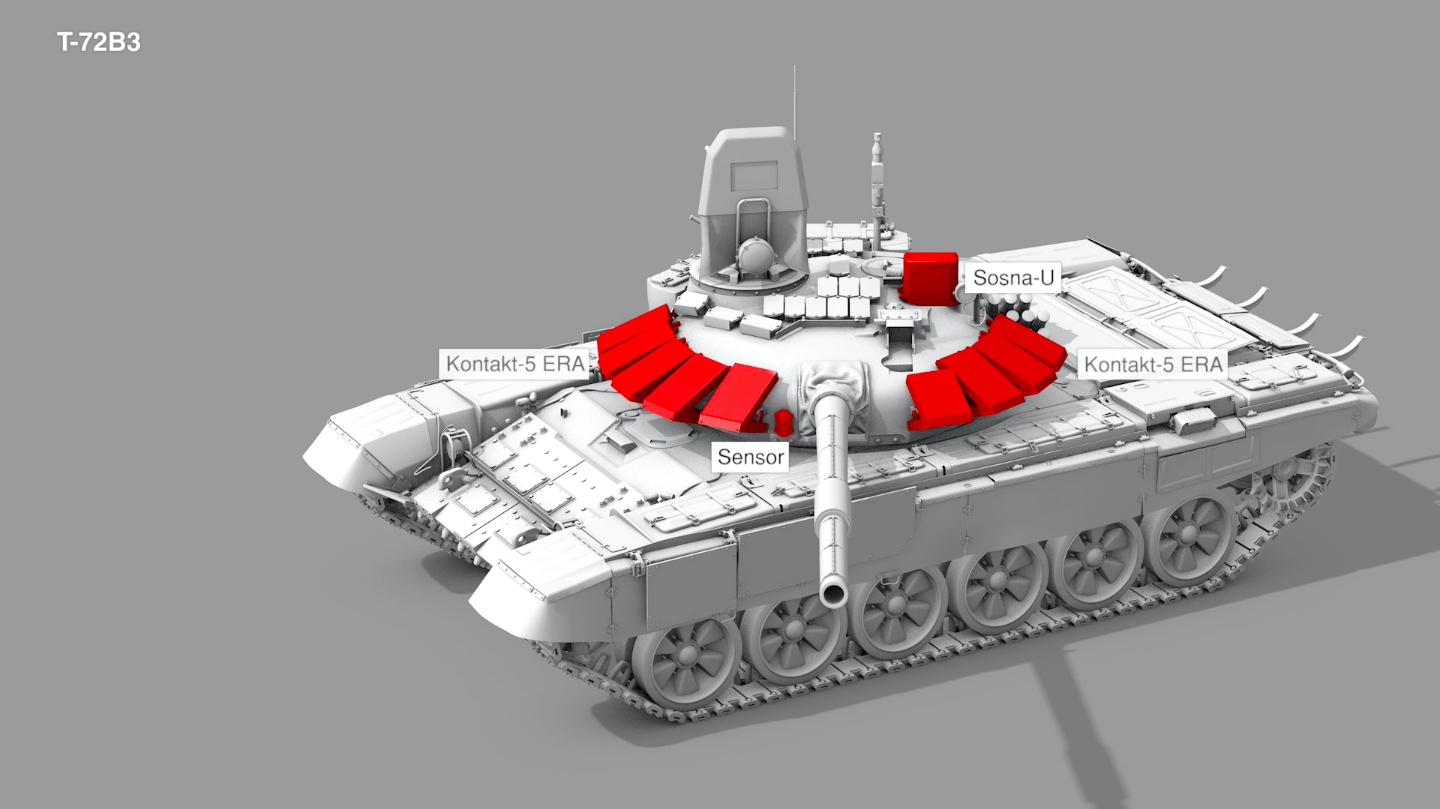

Those videos were made as specific instances where we can demonstrate the strongest evidence of Russian military presence. Like the appearance of a specific tank model, the T-72B3, which was uniquely deployed by the Russian military at the time. It was also important that we discovered that same tank in several pieces of information that were never linked before. That was a unique find. Or, the geolocation of a specific house in Chervonosilske in a video which features the captured Russian soldiers. This was unique as it was pinpointed both through the geolocation process and through an analysis of architectural features.

OR:

We know that Russia is very aggressive in its retaliation against attempts to expose its covert military presence in Ukraine and elsewhere. Did Forensic Architecture or you personally experience this?

NZ:

Not to a degree that was concerning, but there’s always a threat. We are a research agency that is part of a university, and what we do is part of an academic setting. In terms of security, we always take precautions, but so far we haven’t had troubles to a significant degree. We have very vocal reactions in the media scape, especially when it comes to our work on Syria, from the pro-Russian elements. But so much of the information we gather and use in our research exists in the open-source community anyway and is already known. We don’t produce anything, we only make links through cross-verifying footage and images that exist online anyway. So any kind of challenge is not to us as an authority (because we’re not an authority, we’re a research agency); it’s a challenge to information and inference. With some projects that we’ve had in Syria, Assad himself responded by calling our piece “fake news.” And the Israeli government also thinks we’re fake news.

OR:

Let’s expect a statement from Putin claiming your Ilovaisk platform is fake news.

NZ:

Part of our mandate is to speak truth to power where we can. When they respond, we know that they’re listening.

OR:

In your individual practice, you’re working with machine learning to reveal discriminatory bias. How does this relate to your work within Forensic Architecture?

NZ:

I use machine learning as part of my research and within Forensic Architecture to investigate what is called the bounding boxes of meaning. For instance, how are the boundaries determined when machine vision is used to say what part of the territory of pixels that is an image is determined as one thing or another? It’s kind of a meta-investigation of the tool and the method itself.

My own research is looking at how in our architecture there are also physical manifestations of territories or boundaries which designate where something belongs and something doesn’t. Not only geographically when we have borders and roads and lines on a map, but also with the physical walls, and with the symbols and ornaments that we use, or the information that’s encoded on our walls. In Forensic Architecture’s perspective, it’s down to the evidence that’s left behind on the architecture itself that allows us to determine: this means this. And that’s what I define as edge—essentially, a conversation around how we generate meaning, how we draw that line, that bounding box. And it’s a conversation around the blurring of that edge in machine learning, which is a contemporary technology that confirms biases that existed forever, essentially, around how we draw meaning around things. But through this technology, it’s faster and more abstract.

This presents a much more threatening danger—the way that we glorify the logic that exists around computing as something that necessarily stands as objective. What we do at Forensic Architecture highlights that as being problematic. We not only find use-cases in which to develop tools that contribute to human rights cases, we also do this as a way to engage with and interrogate the tool itself. Machine learning is the tool that creates correlations between the thousands of pixels in an image by assigning values to them—zeroes and ones—based on contrast and darkness and the relation that pixels have between them. But a computer doesn’t understand what a tank is. I need to tell it: here’s ten thousand images, and I need to draw a bounding box or an edge around where the tank is. What happens then—a human needs to decide where the tank is. For a machine to start deciding what means Ukrainian, what means Russian, what a tank is, what a Russian tank is, a human first needs to assign these meanings to the dataset it is being fed—that is why we always have bias. And then the machine learns from bias, and the results of the machine are never objective. It is important then to add mitigating measures to account for these biases especially if the classifier being used is a pre-trained one—that is, a classifier resulting from training on datasets that we ourselves did not gather or label with various meanings. Hence it is crucial in our use-case of the tool that the results are always run by a human researcher.

Through the Ilovaisk project we were also trying to investigate ways of discovering those biases in a whole lot of topics. Take for instance synthetic data, which, in summary, is generating images and datasets ourselves through hyper-realistic renders, as a way of supplementing the database of real images that we don’t have. At the same time, these renders allow us to introspect the tool itself. By generating the image, we’re able to control the lighting, the graininess, etc. We turn them on and off, we see how this affects the machine, and we’re allowed to question how the machine also inherits biases from the ways in which the image was captured. It opens up a whole other avenue about what an object even is in an image, as a more abstract conversation. Where does the edge of a tank end on an image? What does that mean? It’s a conversation about the dangers of biases in these tools and how we can mitigate them when we use them. All of these things are part of what we do. That is, investigate the tool itself.
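Editorial note: the question of where the edge of a tank ends can be made concrete with a small sketch. Two people asked to draw a bounding box around the same object will rarely draw the same box, and a standard way to measure their disagreement is intersection over union (IoU). The interview does not mention this measure; it is brought in here only as an illustration, and the box coordinates are invented.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (left, top, right, bottom).

    Coordinates are in pixels, with left < right and top < bottom.
    Returns 1.0 for identical boxes and 0.0 for boxes that do not overlap.
    """
    left = max(box_a[0], box_b[0])
    top = max(box_a[1], box_b[1])
    right = min(box_a[2], box_b[2])
    bottom = min(box_a[3], box_b[3])
    # Width and height of the overlap are clamped at zero for disjoint boxes.
    intersection = max(0, right - left) * max(0, bottom - top)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


# Two invented annotations of the same object by different people.
annotator_1 = (100, 100, 300, 200)
annotator_2 = (120, 110, 320, 210)
agreement = iou(annotator_1, annotator_2)
```

Even a shift of 10 to 20 pixels leaves the two boxes agreeing on only about two thirds of their combined area, which gives a rough sense of how much human judgment is already embedded in a labeled dataset before any training starts.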

For more information, contact program@e-flux.com.