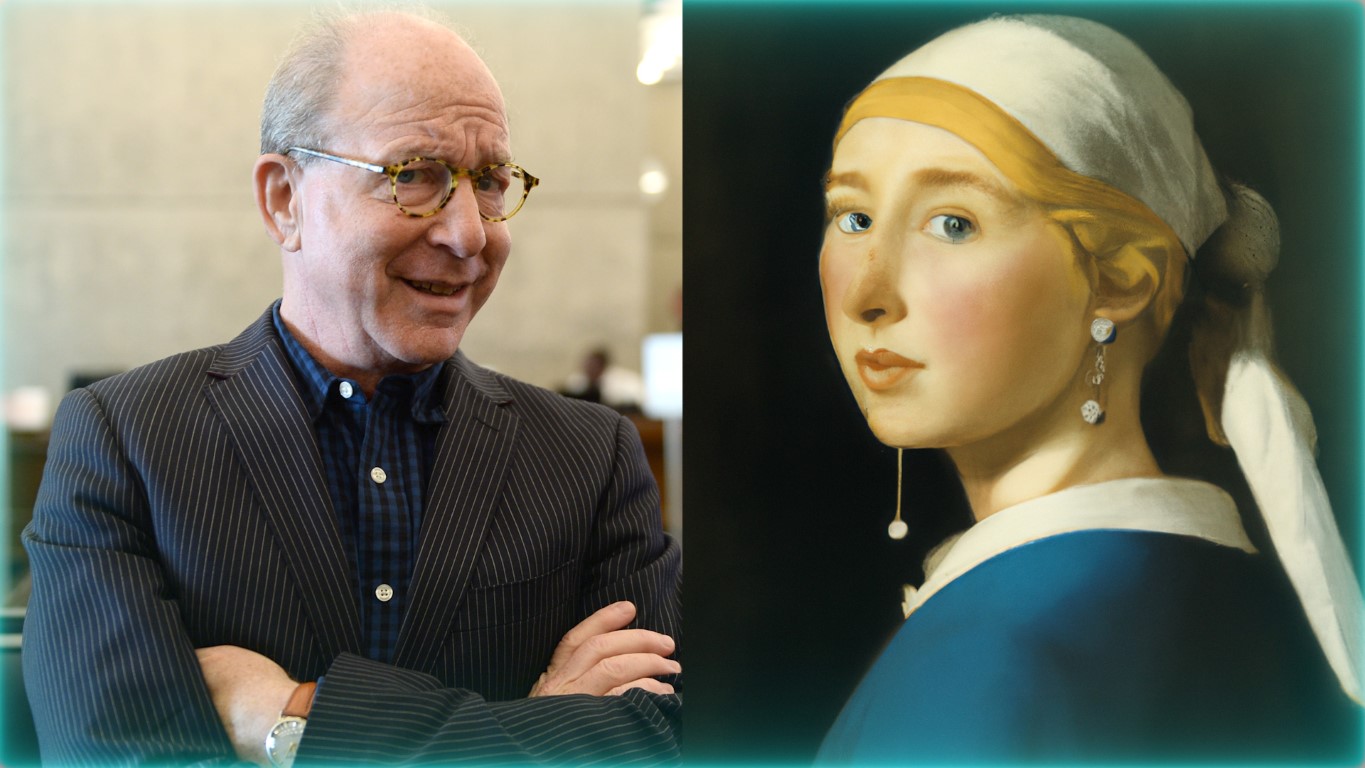

Jerry Saltz commenting on DALL-E for CNN.

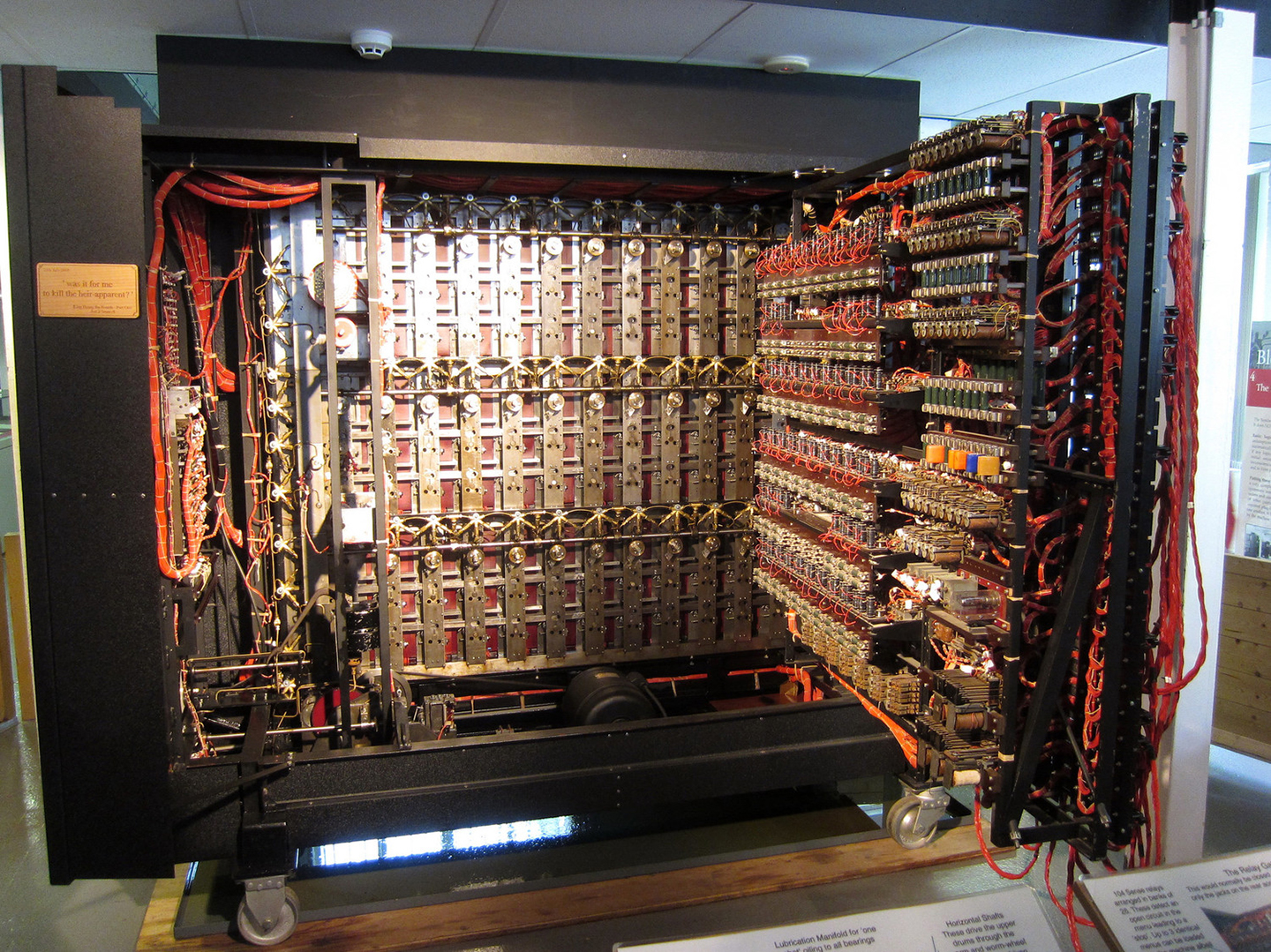

It’s hardly news to anyone that we are in the middle of the first image-generation craze in human history. Over the past year, OpenAI, the San Francisco–based artificial intelligence company founded by Elon Musk and Sam Altman, has released two generations of its newest AI: DALL-E, a portmanteau of “Dalí” and “WALL-E.” Building on the company’s last hit release, GPT-3, DALL-E generates images based on a text prompt. Immediately after its January 5, 2021 release, the internet was flooded with generated images. DALL-E gives its users the weird ability to create a photorealistic representation of anything that comes to mind. From the sillier “teddy bears working on AI research on the moon in the ’80s,” to the slightly more serious “Möbius strip as a fractal in the style of M. C. Escher,” any sentence can suddenly be converted into an image. Some of these images are quite beautiful, and it certainly seems that DALL-E’s pieces have some real aesthetic value. This raises a host of questions: Have we made a machine that can imagine? If so, does it imagine in the same way that we do? And perhaps most distressingly, at least for anyone with a slightly dystopian attitude towards AI, has the age of human art now passed? Have we made the first genius program? Perhaps this truly is an electronic Dalí, as the name suggests. On its website, OpenAI says that its “hope is that DALL-E 2 will empower people to express themselves creatively.” Can self-expression be outsourced to a machine? Can an automatically generated image have anything to do with self-expression? It certainly takes a genius, or an analyst, to guide someone else to self-expression. Think of Socrates, the midwife of philosophical positions.

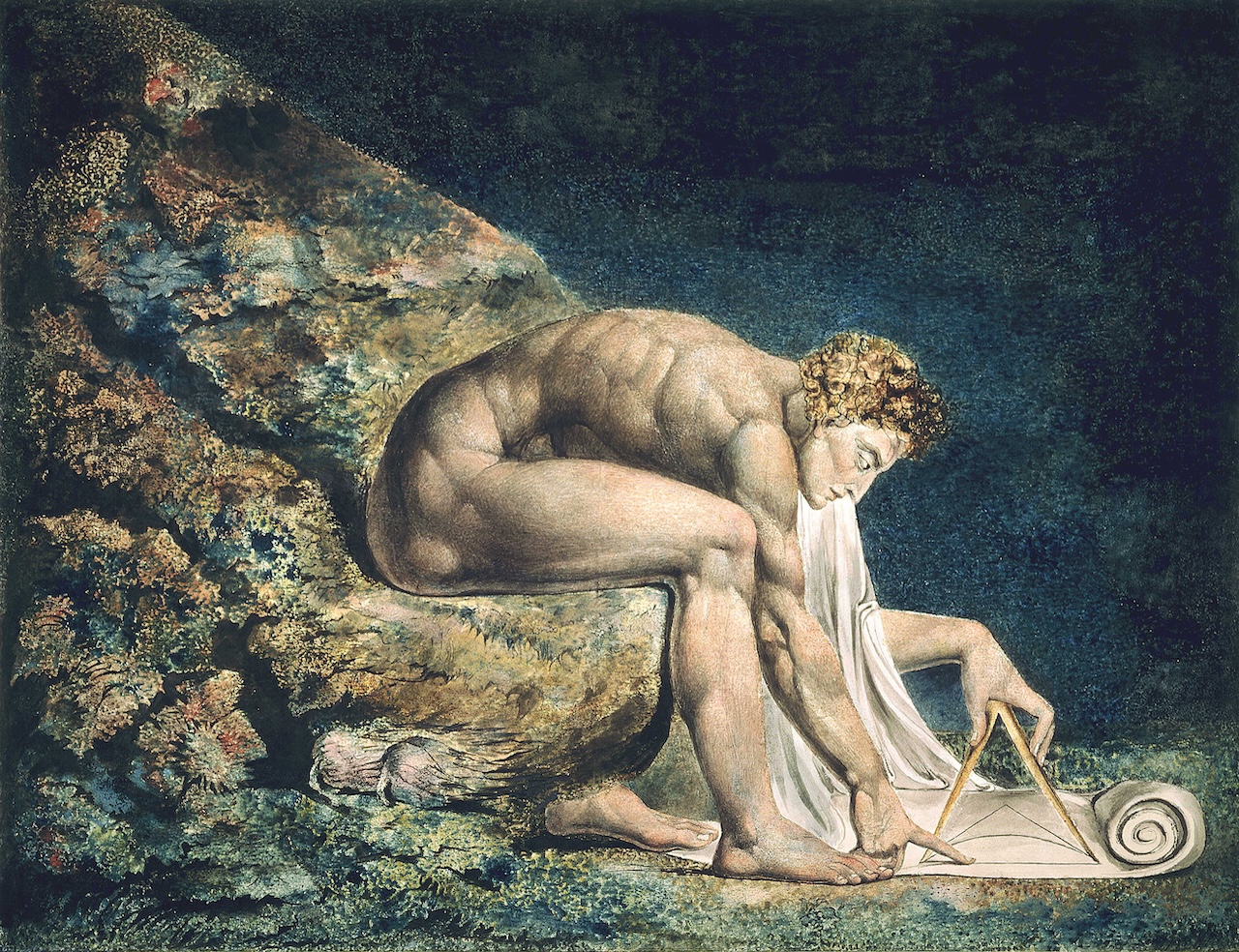

Can DALL-E be said to imagine? If we stick to the “image” in “imagine,” and understand imagination as an image-creating faculty, as with the German Einbildungskraft, then certainly yes. That is exactly what DALL-E excels at. DALL-E can imagine in much more detail than we can, and the figments of its imagination are far more impressive. As ridiculous as it is to say, DALL-E imagines better than we do. Still, there is a crucial difference in the way it makes its images. While it imagines better in one sense, what matters far more is that it imagines differently from us. DALL-E’s neural-networked imagination is qualitatively different from ours. François Chollet, a prominent AI researcher at Google and the developer responsible for Keras, a widely used deep learning toolkit, recently tweeted about these differences, writing: “Humans are largely unable to reproduce the visual likeness of something. But they know what the parts are (2 wheels + 2 pedals + handlebar + saddle). On the other hand, a [deep learning] model is excellent at reproducing local visual likeness (what it’s fitted on), yet it has no understanding of the parts & their organization.”1

This difference is fascinating: while we imagine with reference to conceptual schema, a model like DALL-E seems to work by texture (hence the photorealism). This bears on a fundamental difference between how we understand concepts and how a model does (if it does). For us, a concept becomes applicable to experience via a schema; this is a central tenet of Kant’s schematism, which allows the imagination to mediate between concepts of the understanding and intuitions. For us, “the concept of a dog signifies a rule in accordance with which my imagination can specify the shape of a four-footed animal in general.”2 DALL-E doesn’t correlate “dog” with a rule for constructing dogs, but with the common color patterns of faces, legs, skin, and fur. We might briefly say that DALL-E imagines with content, where we imagine with form. It imagines better because, for DALL-E, the quality of the image is its essence, while for us the image only requires certain formal features in order to satisfy. This is a manifestation of the difference typical of these models: they are entirely detail-oriented and have no rough sense of the whole. To say that a dog has four legs requires a rough sketch of what kind of animal a dog is, and models only focus on the structure of the encoded information. For us, a concept is abstract; for a model, it is an amalgamation of concrete things.

Does its focus on content prevent DALL-E from being a genius artist? If I told you of a person who can create beautiful and vivid images of anything you ask of them, even perfectly imitating the style of other artists, the word “genius” would not be far off your tongue. Still, it seems an utterly inappropriate description of DALL-E. In fact, the only times I’ve seen the word attributed to DALL-E have been in the phrase “comic genius.” Why is that? What is it that a genius artist does that’s so foreign to DALL-E? The genius artist sets an aesthetic standard, opening up new avenues of sensibility by showing, within the work itself, an exemplar of the logic of this new kind of sensibility. The genius in this sense is an instructive figure whose primary educational tool is the work of art. DALL-E may teach us many things about the generative capacities of machines, but it is instinctively obvious that it has nothing to teach us about our own sensibilities. Looking at DALL-E’s generated images, one doesn’t get the sense that there is a whole lot to be learned about beauty. One marvels at the pristine technical work. This harks back to something common to our expectations of AI: that it be an alien and independent producer of meaning. On that front it is almost certainly doomed to disappoint. Where the lack of meaning we experience calls us to create meaning, that absence has some positive, productive presence; the lack of meaning in a deep learning model remains entirely negative, and the model can do nothing with it.

So, if not an artistic genius, why do some get the sense that DALL-E is a “comic genius”? DALL-E opens some interesting and dangerous avenues of human endeavor, mostly concerning the fate of visual artists in our society. But we should focus on what it is now, on actually existing AI, so to speak. Currently, DALL-E could best be characterized as a meme-machine. Most of the content made with DALL-E is what are called “high-effort memes”: something stupid that requires a lot of work to make. (There’s the famous example of “the Bee movie but every time someone says bee it gets faster,” and its many, many variants.)3 As Wikipedia has it: “Most coverage of DALL-E focuses on a small subset of ‘surreal’ or ‘quirky’ outputs.”4 This is no accident. When presented with something that will convert our wildest thoughts into images, the first instinct is to make something absurd. (This is, of course, only true because OpenAI has disallowed pornographic prompts. After porn, play is the most popular use of technology.) So we ask it for an avocado sitting in a lawn chair, Hegel on a bagel, or two baked potatoes having a business meeting. These purposefully silly prompts have an interesting effect when put into a powerful, state-of-the-art piece of technology. There is an inherent incongruity about it. The material is absolutely ridiculous, while the machine operating on it is as serious as it gets. The highest is conjoined to the lowest. As Herbert Spencer points out in his Physiology of Laughter (1860), that’s generally quite funny. As it stands now, for us, DALL-E is an invitation to play, and playing with something very serious is funny. DALL-E might be a comic genius because it can turn plain old nonsense into comedy gold, in the manner of a good comic actor. At the end of the day, for both, it’s all about performance.